The first case allows batching many input images together, to model use cases like inference in the cloud where thousands of users submit images every second. The paper looks at two cases for each network. To cover a range of possible inference scenarios, the NVIDIA inference whitepaper looks at two classical neural network architectures: AlexNet (2012 ImageNet ILSVRC winner), and the more recent GoogLeNet (2014 ImageNet winner), a much deeper and more complicated neural network compared to AlexNet. In general, we might say that the per-image workload for training is higher than for inference, and while high throughput is the only thing that counts during training, latency becomes important for inference as well. To minimize the network’s end-to-end response time, inference typically batches a smaller number of inputs than training, as services relying on inference to work (for example, a cloud-based image-processing pipeline) are required to be as responsive as possible so users do not have to wait several seconds while the system is accumulating images for a large batch. It is common to batch hundreds of training inputs (for example, images in an image classification network or spectrograms for speech recognition) and operate on them simultaneously during DNN training in order to prevent overfitting and, more importantly, amortize loading weights from GPU memory across many inputs, increasing computational efficiency.įor inference, the performance goals are different.

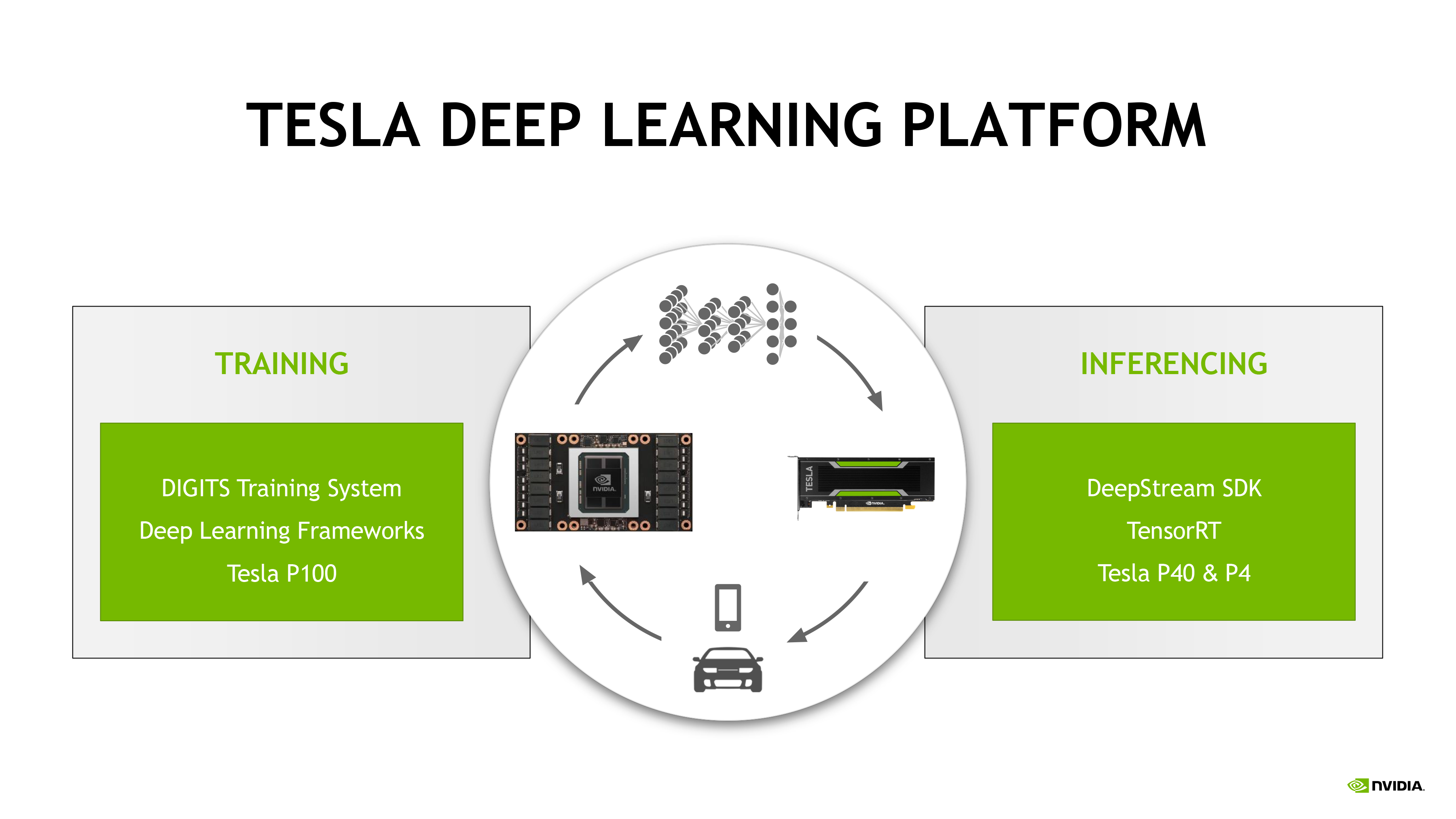

A backward propagation phase propagates the error back through the network’s layers and updates their weights using gradient descent in order to improve the network’s performance at the task it is trying to learn. As Figure 1 illustrates, after forward propagation, the results from the forward propagation are compared against the (known) correct answer to compute an error value. Inference versus Trainingīoth DNN training and Inference start out with the same forward propagation calculation, but training goes further. 3.9 images/second/Watt) than the state-of-the-art Intel Core i7 6700K.

242 images/second) and much higher energy efficiency (45 vs. The NVIDIA Tegra X1 SoC also achieves impressive results, achieving higher performance (258 vs. In particular, the NVIDIA GeForce GTX Titan X delivers between 5.3 and 6.7 times higher performance than the 16-core Intel Xeon E5 CPU while achieving 3.6 to 4.4 times higher energy efficiency. The results show that GPUs provide state-of-the-art inference performance and energy efficiency, making them the platform of choice for anyone wanting to deploy a trained neural network in the field. A new whitepaper from NVIDIA takes the next step and investigates GPU performance and energy efficiency for deep learning inference. It is widely recognized within academia and industry that GPUs are the state of the art in training deep neural networks, due to both speed and energy efficiency advantages compared to more traditional CPU-based platforms. In inference, the trained network is used to discover information within new inputs that are fed through the network in smaller batches. In training, many inputs, often in large batches, are used to train a deep neural network. Figure 1: Deep learning training compared to inference. Then, the network is deployed to run inference, using its previously trained parameters to classify, recognize and process unknown inputs. On a high level, working with deep neural networks is a two-stage process: First, a neural network is trained: its parameters are determined using labeled examples of inputs and desired output. Deep learning is revolutionizing many areas of machine perception, with the potential to impact the everyday experience of people everywhere. At 45 images/s/W, Jetson TX1 is super efficient at deep learning inference.
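The batching trade-off described in this article (larger batches amortize weight loading and raise throughput, but make individual requests wait longer) can be illustrated with a toy cost model. This is a minimal sketch and is not taken from the whitepaper: the function names, the fixed `weight_load_ms` cost, the `per_image_ms` cost, and the arrival rate are all hypothetical values chosen only to show the shape of the trade-off.

```python
# Toy model of batching: every constant here is a made-up assumption,
# not a measurement from any GPU or from the NVIDIA whitepaper.

def batch_time_ms(batch_size, weight_load_ms=8.0, per_image_ms=1.0):
    """Time to process one batch: a fixed cost (loading weights once)
    plus a cost that grows linearly with the number of images."""
    return weight_load_ms + per_image_ms * batch_size


def throughput_images_per_s(batch_size, **costs):
    """Images processed per second when batches run back to back."""
    return 1000.0 * batch_size / batch_time_ms(batch_size, **costs)


def worst_case_latency_ms(batch_size, arrival_rate_per_s, **costs):
    """Latency seen by the first image of a batch: it waits for the
    remaining images to arrive, then for the whole batch to be processed."""
    fill_ms = 1000.0 * (batch_size - 1) / arrival_rate_per_s
    return fill_ms + batch_time_ms(batch_size, **costs)


if __name__ == "__main__":
    arrival_rate = 100.0  # hypothetical: 100 images arrive per second
    for b in (1, 8, 64, 256):
        print(
            f"batch={b:4d}  "
            f"throughput={throughput_images_per_s(b):8.1f} img/s  "
            f"latency={worst_case_latency_ms(b, arrival_rate):8.1f} ms"
        )
```

Under these assumed costs, throughput rises with batch size because the fixed weight-loading cost is shared by more images, while the worst-case latency rises because early arrivals wait for the batch to fill. That is why a training job can favor large batches while a responsive inference service favors small ones.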
